Master web testing in 2026: boost conversions 20% faster

You've heard A/B testing can transform your conversion rates, but maybe it feels too technical or resource-intensive for your small marketing team. The truth is simpler than you think. Modern web testing isn't reserved for enterprises with data science teams. With the right approach and tools, you can run meaningful experiments that directly impact revenue, even with limited traffic and zero coding skills. This guide cuts through the complexity to show you exactly how to launch, manage, and learn from web tests that actually move the needle for your business.

Table of Contents

- Understanding A/B Testing Mechanics: How Web Tests Really Work

- Avoiding Common A/B Testing Mistakes: Pitfalls And Nuances For SMB Marketers

- Choosing Your Web Testing Strategy: Frequentist Vs Bayesian And AI-Assisted Approaches

- Practical Web Testing For SMB Growth Hackers: Tools, Focus, And Iteration Tips

- Simplify Your Web Testing With GoStellar

Key takeaways

| Point | Details |
|---|---|
| Testing splits traffic | A/B testing randomly divides visitors between the original and variant pages, then checks whether the conversion difference is statistically significant, typically at a 95% confidence level. |
| Common mistakes inflate errors | Early peeking and multiple comparisons without corrections can increase false positives up to 64%, leading to wrong decisions. |
| Strategy depends on traffic | Low-traffic SMBs benefit from sequential or Bayesian methods that allow safe early analysis without strict sample size requirements. |
| Most tests fail but teach | Only one third of experiments show positive lift, yet consistent iteration compounds learning and drives measurable growth over time. |
| No-code tools democratize testing | Platforms with visual editors let marketers launch experiments on high-impact pages without developer support or technical barriers. |

Understanding A/B testing mechanics: how web tests really work

Before launching your first experiment, you need a clear hypothesis. This isn't just a hunch about what might work better. A proper hypothesis states what you're changing, why you believe it will improve conversions, and which metric will prove success. For example, "Changing the CTA button from green to red will increase sign-ups by 15% because red creates urgency."

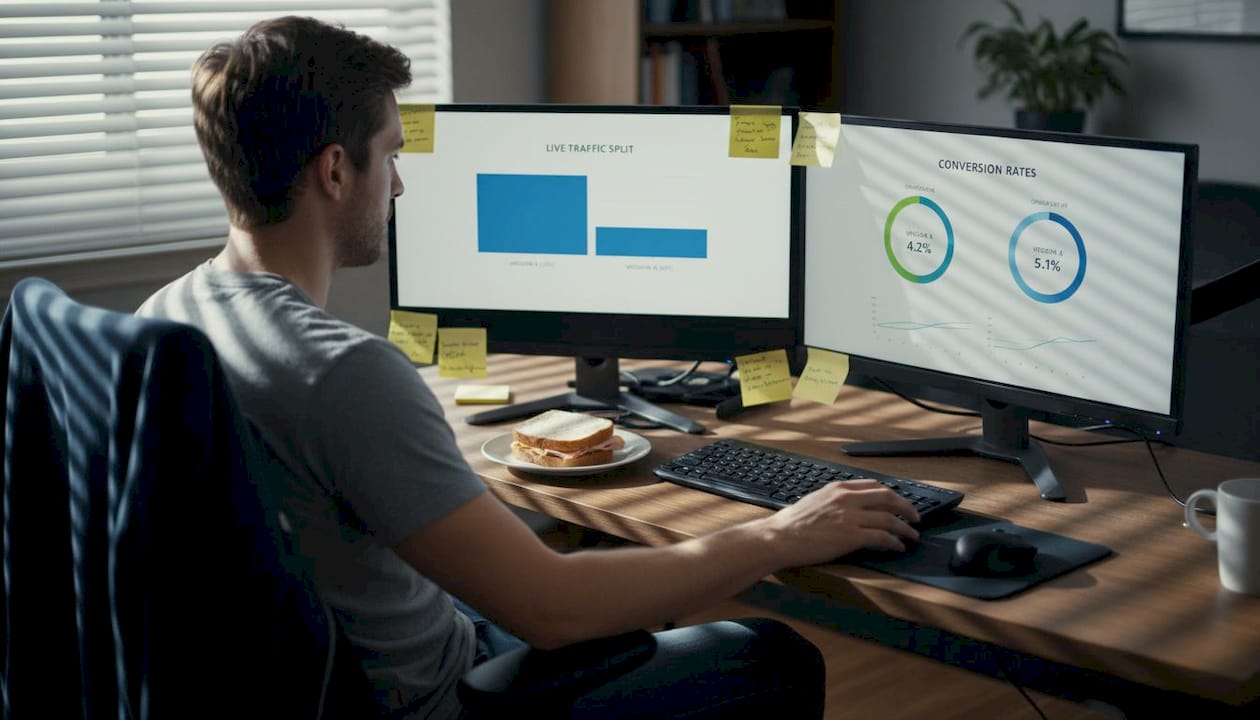

Once you've defined your hypothesis, A/B testing randomly splits your website traffic between the original page (control A) and your new variation (B). Randomization means external factors like time of day or traffic source affect both versions equally on average, so a difference in results can be attributed to your change. Your testing platform tracks each visitor's behavior and records whether they complete your target action.
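
Your testing platform handles this split for you, but the mechanism is easy to sketch. The Python below is a minimal illustration, not how any particular tool does it: it assumes each visitor carries a stable ID (for example, from a first-party cookie), and the function name `assign_variant` and the experiment key `cta-color-test` are hypothetical. Hashing the ID together with the experiment name produces a split that behaves like random assignment across visitors while keeping each returning visitor in the same variant.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, split_b: float = 0.5) -> str:
    """Deterministically bucket a visitor into 'A' (control) or 'B' (variant).

    Hypothetical helper for illustration. split_b is the share of traffic sent to B.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 8 hex digits (32 bits) of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 2**32
    return "B" if bucket < split_b else "A"


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"visitor-{i}", "cta-color-test")] += 1
    print(counts)  # close to 5,000 each
    # The same visitor always lands in the same variant.
    print(assign_variant("visitor-42", "cta-color-test")
          == assign_variant("visitor-42", "cta-color-test"))
```

Keying the hash on the experiment name means a visitor's bucket in one test doesn't dictate their bucket in the next.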

Before the experiment starts, decide how many visitors each variant needs, then let it run until it reaches that sample size and check whether the difference is statistically significant at 95% confidence. Detecting a relative conversion lift of around 20% typically requires at least 3,000 visitors per variant, and more if your baseline conversion rate is low. Stopping too early, even if results look promising, dramatically increases your chances of declaring a false winner. Resist the temptation to peek and make decisions before collecting adequate data.
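
The 3,000-visitor figure depends heavily on your baseline conversion rate. As a rough sketch, the standard normal-approximation formula for comparing two proportions estimates the sample you need before launch. The function name and the 80% power default are assumptions for illustration; dedicated calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant for a two-sided two-proportion test.

    baseline: control conversion rate (0.10 = 10%)
    relative_lift: smallest relative lift worth detecting (0.20 = +20%)
    power: assumed 80% chance of detecting a lift that really exists
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 at 95% confidence
    z_power = NormalDist().inv_cdf(power)          # about 0.84 at 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


if __name__ == "__main__":
    for baseline in (0.10, 0.05, 0.02):
        n = sample_size_per_variant(baseline, 0.20)
        print(f"baseline {baseline:.0%}: ~{n:,} visitors per variant")
```

Under these assumptions, a 20% relative lift from a 10% baseline needs roughly 3,800 visitors per variant, from a 5% baseline roughly 8,200, and from a 2% baseline more than 20,000.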

Once the test has collected its planned sample, analyze the primary conversion metric you chose before starting. This might be purchases, email sign-ups, demo requests, or revenue per visitor. Secondary metrics provide context but shouldn't override your predetermined success measure. If variant B shows a statistically significant improvement, you've found a winner worth implementing permanently.
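
One common way to analyze a finished test on a conversion metric is a pooled two-proportion z-test. The sketch below assumes that method and uses hypothetical visitor and conversion counts; your platform may use a different test, and the function name is illustrative.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Pooled two-proportion z-test comparing variant B with control A.

    Returns (relative_lift, z, two_sided_p_value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # shared rate if there were no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_b / rate_a - 1, z, p_value


if __name__ == "__main__":
    # Hypothetical results after reaching a planned 3,900 visitors per variant.
    lift, z, p = two_proportion_z_test(conv_a=380, n_a=3900, conv_b=460, n_b=3900)
    print(f"lift {lift:+.1%}, z = {z:.2f}, p = {p:.4f}")
    print("significant at 95%" if p < 0.05 else "not significant")
```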

Pro Tip: Document every test hypothesis, methodology, and result in a shared spreadsheet. This testing library becomes invaluable for spotting patterns, avoiding repeated experiments, and onboarding new team members to your optimization culture.

The A/B testing best practices framework ensures you're measuring real improvements rather than random noise. Small sample sizes or premature analysis can lead to costly mistakes where you implement changes that actually hurt conversions. Following proper mechanics protects your business from these expensive errors.

Avoiding common A/B testing mistakes: pitfalls and nuances for SMB marketers

Early peeking is the most dangerous trap in web testing. When you check results before reaching your predetermined sample size, you're essentially running multiple tests and cherry-picking the moment when results look best. Research shows this practice can push the false positive rate as high as 64%: even when a variation performs no better than the original, you could end up declaring it a winner nearly two times out of three.
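
You can see the peeking effect yourself with a quick simulation. The sketch below runs hypothetical A/A tests, where both versions share the same true 10% conversion rate, so every declared winner is a false positive. It compares checking a 95% z-test after each of 20 batches of traffic with checking only once at the end. The inflation grows with the number of looks, so this setup won't necessarily match the 64% figure above; the gap between the two policies is the point. Function names and parameters are illustrative.

```python
import math
import random


def is_significant(conv_a, n_a, conv_b, n_b, z_crit=1.96):
    """Two-sided pooled two-proportion z-test; True if |z| exceeds z_crit (~95%)."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return False
    z = (conv_b / n_b - conv_a / n_a) / se
    return abs(z) > z_crit


def simulate_aa_tests(n_sims=300, looks=20, batch=100, rate=0.10, seed=7):
    """Run A/A tests (no real difference); return (peeking rate, fixed-horizon rate)."""
    rng = random.Random(seed)
    peeking_wins = 0  # significant at ANY look: a peeker would stop there and ship
    fixed_wins = 0    # significant at the single, planned final check
    for _ in range(n_sims):
        conv_a = conv_b = n = 0
        declared = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if is_significant(conv_a, n, conv_b, n):
                declared = True
        peeking_wins += declared
        fixed_wins += is_significant(conv_a, n, conv_b, n)
    return peeking_wins / n_sims, fixed_wins / n_sims


if __name__ == "__main__":
    peek, fixed = simulate_aa_tests()
    print(f"false winners with peeking: {peek:.0%}, with one final check: {fixed:.0%}")
```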

Testing multiple metrics or page versions simultaneously creates similar problems. If you track ten independent KPIs, each tested at 95% confidence, there's roughly a 40% chance that at least one shows significance even with no real effect. The Bonferroni correction divides your significance threshold by the number of comparisons, while the Benjamini-Hochberg method offers a less conservative approach that controls the false discovery rate. Without these adjustments, you're likely implementing changes based on statistical flukes.
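
Both corrections fit in a few lines. This sketch takes one p-value per metric and returns which metrics stay significant; the metric names, p-values, and function names are hypothetical. It also shows where the false-alarm risk comes from: with ten independent metrics each tested at 5%, the chance of at least one false positive is 1 - 0.95^10, about 40%.

```python
def bonferroni(p_values, alpha=0.05):
    """Flag p-values that stay significant after dividing alpha by the number of tests."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values, q=0.05):
    """Flag p-values significant while controlling the false discovery rate at q."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    cutoff = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank * q / m:
            cutoff = rank  # largest rank whose p-value clears its threshold
    significant = [False] * m
    for idx in order[:cutoff]:
        significant[idx] = True
    return significant


if __name__ == "__main__":
    metrics = ["sign-ups", "demo requests", "revenue/visitor", "bounce rate", "time on page"]
    p_values = [0.004, 0.018, 0.027, 0.300, 0.700]  # hypothetical
    print("Bonferroni:", dict(zip(metrics, bonferroni(p_values))))
    print("Benjamini-Hochberg:", dict(zip(metrics, benjamini_hochberg(p_values))))
    print(f"Chance of at least one false alarm across 10 metrics: {1 - 0.95 ** 10:.0%}")
```

Here Bonferroni keeps only sign-ups, while Benjamini-Hochberg keeps the first three metrics, which is what "less conservative" means in practice.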

Low-traffic websites face unique challenges that standard testing approaches don't address. If your pages receive only a few hundred visitors weekly, reaching 3,000 per variant could take months. Sequential and Bayesian methods ease this by letting you review results as data arrives, through planned interim checks or continuously updated probabilities, rather than waiting for one fixed sample size. Set up correctly, these approaches adapt to your traffic reality without sacrificing statistical validity.
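
For a sense of what a Bayesian analysis reports, here is a minimal sketch of one common formulation, assuming a Beta-Binomial model with a uniform Beta(1, 1) prior. It estimates the probability that variant B's true conversion rate beats A's by sampling from each posterior. The function name and counts are hypothetical, and how safely you can act on early looks still depends on the prior and the stopping threshold you pick in advance.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=1):
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior for each variant: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


if __name__ == "__main__":
    # Hypothetical low-traffic test: 400 visitors per variant so far.
    p = prob_b_beats_a(conv_a=40, n_a=400, conv_b=58, n_b=400)
    print(f"Probability B beats A: {p:.1%}")
    # A decision rule such as "ship B above 95%" should be fixed before launch.
```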

"The biggest mistake I see is companies running tests without proper segmentation. What works for mobile users often fails on desktop, and combining them masks these critical differences."

Segment differences require separate analysis or dedicated tests. Mobile visitors behave differently than desktop users. New customers respond to different messaging than returning buyers. Running a single test across all segments might show no overall effect while hiding significant wins or losses within specific groups. Always consider whether your audience segments warrant individual attention.
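
A quick way to check for hidden segment effects is to compute conversion rates per segment alongside the combined result. The numbers below are hypothetical, chosen so the overall rates tie while mobile and desktop move in opposite directions; the function name is illustrative.

```python
from collections import defaultdict

# Hypothetical results: (segment, variant, visitors, conversions)
RESULTS = [
    ("mobile", "A", 2000, 100),
    ("mobile", "B", 2000, 140),
    ("desktop", "A", 2000, 200),
    ("desktop", "B", 2000, 160),
]


def conversion_rates(rows, by_segment=True):
    """Rate per (segment, variant), or per ('all', variant) when by_segment is False."""
    totals = defaultdict(lambda: [0, 0])  # key -> [visitors, conversions]
    for segment, variant, visitors, conversions in rows:
        key = (segment, variant) if by_segment else ("all", variant)
        totals[key][0] += visitors
        totals[key][1] += conversions
    return {key: conv / vis for key, (vis, conv) in totals.items()}


if __name__ == "__main__":
    for key, rate in conversion_rates(RESULTS, by_segment=False).items():
        print(key, f"{rate:.1%}")  # both variants: 7.5%
    for key, rate in conversion_rates(RESULTS).items():
        print(key, f"{rate:.1%}")  # mobile: 5.0% vs 7.0%, desktop: 10.0% vs 8.0%
```

Treat segments you didn't plan before launch as new hypotheses to test, since slicing results many ways raises the same multiple-comparison risk described earlier.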

External timing factors can completely invalidate your results. Running tests during holiday promotions, major sales events, or unusual traffic spikes introduces variables that have nothing to do with your page changes. A variant might appear to win simply because it ran during peak buying season. Schedule tests during typical business periods and avoid overlapping with known anomalies.

Pro Tip: Set calendar reminders for your planned test end dates based on traffic projections. This removes the temptation to check results early and helps you commit to proper sample sizes before launching experiments.
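
To put a date on that reminder, divide the total sample you need by your expected daily traffic. This sketch assumes traffic arrives at a steady daily rate and is split evenly across variants; the function name and numbers are hypothetical.

```python
import math
from datetime import date, timedelta


def planned_end_date(start: date, visitors_per_variant: int, variants: int,
                     daily_visitors: float) -> date:
    """Date the test should reach its planned sample, assuming steady daily traffic."""
    days_needed = math.ceil(visitors_per_variant * variants / daily_visitors)
    return start + timedelta(days=days_needed)


if __name__ == "__main__":
    # Hypothetical: 3,000 visitors per variant, two variants, about 200 visitors a day.
    end = planned_end_date(date(2026, 4, 6), 3000, 2, 200)
    print(end.isoformat())  # 2026-05-06
```

Many teams also round the duration up to whole weeks so both weekday and weekend behavior are covered.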

The A/B testing checklist 2025 walks through each potential pitfall with actionable prevention steps. Many SMB marketers lose thousands in revenue by implementing false winners or missing real opportunities hidden in noisy data. Understanding these nuances separates effective optimization from expensive guesswork.

Choosing your web testing strategy: frequentist vs Bayesian and AI-assisted approaches

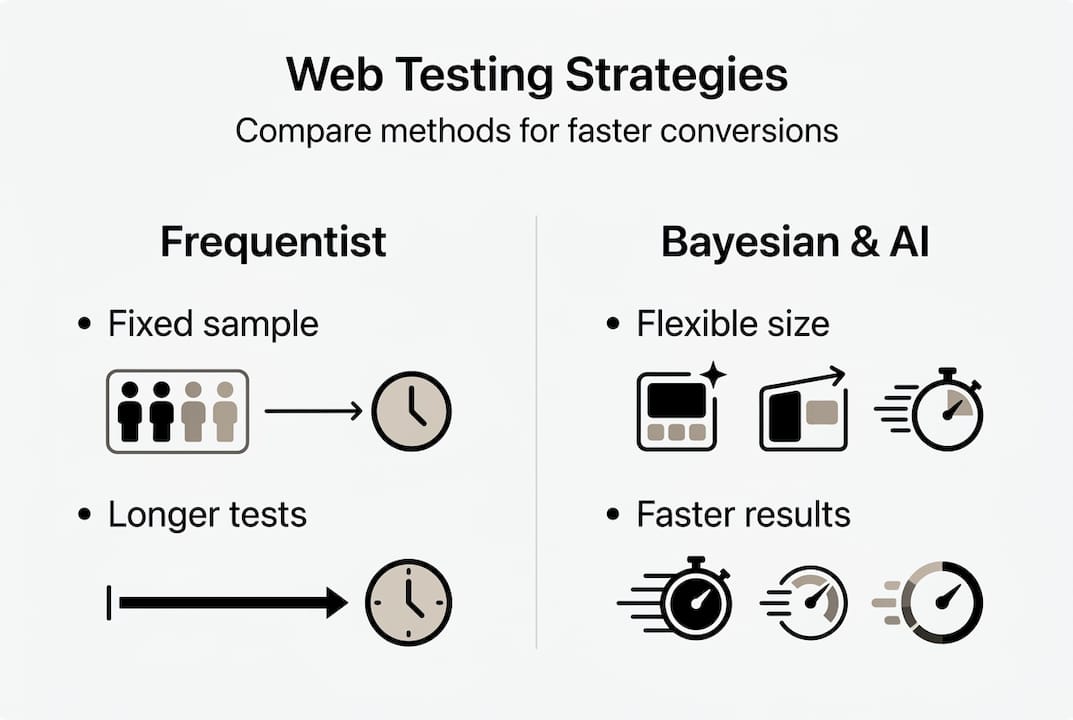

Frequentist testing represents the traditional approach most marketers learn first. You calculate required sample size upfront, collect exactly that much data without peeking, then analyze results once. This method provides clear yes or no answers with known error rates. The downside? Rigid requirements that don't flex for real world constraints like traffic fluctuations or business urgency.

Bayesian and sequential methods offer flexibility that suits SMB realities better. Instead of relying on one fixed sample size, these approaches update their evidence as data arrives, so you can check results along the way and stop once a decision threshold you set in advance is met. For low-traffic websites, this can mean getting answers in weeks rather than months without sacrificing statistical rigor, provided the stopping rules are chosen before the test starts.
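
Sequential testing covers several designs. The sketch below shows one classic frequentist version, a group sequential test with a Pocock boundary: you plan five equally spaced looks in advance and require |z| above about 2.413 at each one instead of 1.96. It is an illustration, not a description of any particular tool. A/A simulations (no real difference) compare naive repeated checks against the adjusted boundary; sample sizes, seeds, and function names are arbitrary.

```python
import math
import random

# Pocock constant for 5 equally spaced looks at two-sided alpha = 0.05,
# from standard group sequential tables. Other numbers of looks need other constants.
POCOCK_Z_5_LOOKS = 2.413


def z_stat(conv_a, n_a, conv_b, n_b):
    """Pooled two-proportion z statistic for B versus A."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return 0.0 if se == 0 else (conv_b / n_b - conv_a / n_a) / se


def aa_false_positive_rate(boundary, n_sims=500, looks=5, batch=300, rate=0.10, seed=11):
    """Share of A/A tests that cross the boundary at any planned look."""
    hits = 0
    for sim in range(n_sims):
        rng = random.Random(seed * 1_000_003 + sim)  # identical data for every boundary
        conv_a = conv_b = n = 0
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            if abs(z_stat(conv_a, n, conv_b, n)) > boundary:
                hits += 1
                break  # a sequential test stops at the first crossing
    return hits / n_sims


if __name__ == "__main__":
    print(f"naive 1.96 at every look:   {aa_false_positive_rate(1.96):.1%}")
    print(f"Pocock 2.413 at every look: {aa_false_positive_rate(POCOCK_Z_5_LOOKS):.1%}")
```

The stricter per-look threshold is the price of being allowed to stop early: it keeps the overall false positive rate near the 5% a single fixed-sample test would have.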

| Approach | Best For | Key Advantage | Main Drawback |
|---|---|---|---|
| Frequentist | High traffic, strict compliance needs | Industry standard, clear error rates | Inflexible, requires large samples |
| Bayesian | Low traffic, faster decisions | Safe peeking, incorporates prior knowledge | Less familiar, requires interpretation skills |
| Sequential | Variable traffic, adaptive testing | Stops early when clear, efficient | More complex setup |
| AI-Assisted | Complex tests, prediction needs | Forecasts outcomes, improves planning | Still emerging, requires quality data |

AI-based simulations represent the cutting edge of test design. Recent research shows AI can predict test outcomes with 67 to 83% accuracy compared to historical data. These tools analyze your past experiments, current traffic patterns, and proposed changes to forecast whether a test will likely succeed. This capability helps you prioritize experiments with higher win probability and avoid wasting time on low impact variations.

Only about one third of tests yield positive lift, but this doesn't mean two thirds are failures. Every experiment that doesn't improve conversions still teaches you something valuable about your audience. Maybe your hypothesis about color psychology was wrong, or perhaps your copy change addressed the wrong objection. These insights compound over time, sharpening your intuition and improving future test ideas.

Selecting your strategy depends on three factors: available traffic, resource constraints, and risk tolerance. High traffic sites with dedicated analysts might prefer frequentist rigor. SMBs with 5,000 monthly visitors and no data team benefit more from Bayesian flexibility. Companies testing critical changes with major revenue implications might run traditional fixed sample tests despite longer timelines. Match your methodology to your specific situation rather than following industry defaults.

The advanced A/B testing strategies guide explores these methodological choices in depth with decision frameworks. Understanding when to apply each approach transforms testing from a rigid checklist into a flexible optimization system that adapts to your business needs.

Practical web testing for SMB growth hackers: tools, focus, and iteration tips

Start every test with solid behavioral data, not random ideas. Install heatmapping tools to see where visitors actually click, how far they scroll, and which elements they ignore. Combine this with Google Analytics 4 to identify high exit pages, low performing CTAs, and conversion bottlenecks. These insights generate hypotheses grounded in real user behavior rather than assumptions about what should work.

Lightweight no-code platforms eliminate the technical barriers that previously restricted testing to companies with developer resources. Tools with visual editors let you modify headlines, images, buttons, and layouts through point and click interfaces. The best options add minimal page load impact, typically under 10KB, so your experiments don't hurt the performance metrics you're trying to improve.

For SMB marketers with low traffic, sequential testing methods avoid the months-long waits that fixed-sample frequentist approaches can require. These adaptive frameworks let you:

- Start tests immediately without complex sample size calculations

- Monitor progress safely without inflating false positive rates

- Stop experiments early when results become conclusive

- Redirect traffic to winners faster, maximizing revenue impact

Focus your limited testing resources on pages and elements with maximum leverage. Your homepage, primary landing pages, and checkout flow deserve attention before obscure blog posts or footer links. Similarly, test your main call-to-action buttons, value proposition headlines, and key conversion form fields. A 20% improvement on a page that drives 40% of your conversions beats a 50% lift on one that generates only 2% of them.
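
The arithmetic behind that comparison is simple if you treat both percentages as shares of your total conversions and assume the lift applies evenly to everything passing through the page, with other pages unchanged. The helper name below is hypothetical.

```python
def site_wide_lift(page_lift: float, page_share: float) -> float:
    """Relative lift in total conversions when only one page improves.

    page_lift: relative improvement on that page (0.20 = +20%)
    page_share: that page's share of total conversions (0.40 = 40%)
    """
    return page_lift * page_share


if __name__ == "__main__":
    print(f"{site_wide_lift(0.20, 0.40):.0%}")  # 8%
    print(f"{site_wide_lift(0.50, 0.02):.0%}")  # 1%
```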

Expect most experiments to show no significant difference or even decrease conversions. Depending on how failure is counted, reported failure rates run as high as 80 to 90%, and testing mistakes have cost companies over €180,000 in lost revenue. This reality makes iteration essential rather than optional. Run one test monthly, learn from each result, and apply those lessons to the next experiment. Twelve tests yearly, even with mostly neutral results, compound into meaningful conversion improvements.

Aim for achievable lifts rather than home runs. Changing button copy from "Submit" to "Get My Free Guide" might boost conversions 15%. Repositioning testimonials above the fold could add another 10%. Moving your CTA higher on mobile might contribute 12% more. These modest improvements multiply across your funnel, and they're far more realistic than expecting a single test to double your conversion rate.
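
Lifts on the same conversion path combine multiplicatively rather than additively, assuming each change works independently of the others. That assumption rarely holds perfectly, so treat the result as an optimistic ceiling. A sketch using the example lifts above (the function name is hypothetical):

```python
from math import prod


def combined_lift(lifts):
    """Overall relative lift when independent lifts stack multiplicatively."""
    return prod(1 + lift for lift in lifts) - 1


if __name__ == "__main__":
    lifts = [0.15, 0.10, 0.12]  # button copy, testimonials, mobile CTA
    print(f"multiplicative: {combined_lift(lifts):.1%}")  # 41.7%
    print(f"simple sum:     {sum(lifts):.1%}")            # 37.0%
```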

Pro Tip: Create a testing roadmap that prioritizes experiments by potential impact multiplied by ease of implementation. Quick wins on high traffic pages should go first, while complex multivariate tests on niche segments can wait until you've built testing momentum and confidence.
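
Here's one way to turn that scoring into a sorted roadmap. The 1 to 10 scales, the class name, and the example ideas are all hypothetical; the score is simply impact multiplied by ease, as described above.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    name: str
    impact: int  # estimated upside, 1 (low) to 10 (high)
    ease: int    # implementation effort, 1 (hard) to 10 (easy)

    @property
    def score(self) -> int:
        return self.impact * self.ease


def build_roadmap(ideas):
    """Order test ideas by impact x ease, highest first."""
    return sorted(ideas, key=lambda idea: idea.score, reverse=True)


if __name__ == "__main__":
    ideas = [
        ExperimentIdea("Multivariate test for a niche segment", impact=6, ease=2),
        ExperimentIdea("Checkout form fields", impact=9, ease=5),
        ExperimentIdea("Homepage headline", impact=8, ease=9),
        ExperimentIdea("Footer links", impact=2, ease=9),
    ]
    for idea in build_roadmap(ideas):
        print(f"{idea.score:>3}  {idea.name}")
```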

The no-code marketing solutions ecosystem has matured dramatically, putting sophisticated optimization capabilities in reach of any marketer willing to learn. You don't need a statistics degree or engineering team to run professional grade experiments that drive real business results.

Before launching any test, validate your hypothesis using the framework in how to validate marketing ideas. This systematic approach ensures you're testing ideas with genuine potential rather than burning through experiments on low probability variations.

Simplify your web testing with GoStellar

You've learned the mechanics, avoided the pitfalls, chosen your strategy, and mapped your practical approach. Now you need a platform that makes implementation effortless rather than overwhelming. GoStellar delivers exactly what SMB marketers need without the complexity that bogs down enterprise solutions.

Our no-code visual editor means you're running your first test within minutes, not weeks of developer coordination. The 5.4KB script ensures your experiments don't slow down the pages you're trying to optimize. Sequential testing support lets you work with real world traffic constraints instead of waiting months for statistical significance. Dynamic keyword insertion personalizes landing pages automatically, while advanced goal tracking captures the conversion metrics that matter to your business. Whether you're testing headlines, layouts, or entire page redesigns, GoStellar provides the speed and simplicity that turns testing from a technical challenge into a growth advantage.

Frequently asked questions

What is web testing and why should SMBs use it?

Web testing systematically compares different versions of webpages to identify which drives better conversion rates, sign-ups, or revenue. SMBs benefit because even small traffic volumes can yield significant revenue improvements when you optimize key pages. Testing validates marketing hypotheses with data rather than opinions, reducing expensive mistakes.

How much traffic do I need to run A/B tests effectively?

Traditional frequentist tests typically need at least 3,000 visitors per variant to reliably detect a 20% relative conversion lift, and more when your baseline conversion rate is low. However, sequential and Bayesian methods can work with lower traffic by allowing continuous analysis and early stopping. Sites with just 1,000 monthly visitors may still run meaningful tests using adaptive approaches, though at that volume only large effects will be detectable.

What should I test first on my website?

Start with your highest traffic pages and most prominent conversion elements. Test your main call to action button copy, headline value propositions, or hero section layouts. These high leverage areas deliver maximum impact from successful experiments. Avoid testing obscure pages or minor elements until you've optimized primary conversion paths.

How long should I run an A/B test before making decisions?

Run tests until they reach the sample size you determined in advance, regardless of how long it takes, and only then check for statistical significance, typically at 95% confidence. Stopping early when results look good dramatically increases false positives. For standard tests, this usually means 2 to 4 weeks depending on traffic. Sequential methods can conclude faster when results become definitive.

What if my A/B test shows no significant difference?

No significant difference means the test couldn't detect an improvement large enough to justify implementation; it doesn't prove the two versions perform identically. This counts as valuable learning, not failure. Document why you hypothesized the change would work, analyze what the results suggest about user behavior, and use these insights to design better next tests. Most experiments show neutral results.

Can I test multiple page elements simultaneously?

Testing multiple elements requires either multivariate testing with much higher traffic requirements or sequential A/B tests of individual changes. Changing several elements at once without proper methodology makes it impossible to identify which change drove results. Start with single element tests until you've built experience and have sufficient traffic for complex experiments.

Published: 3/19/2026